Why Adaptive Agents Define Genuine Complex Systems

Where the math ends and the mind begins

Complexity is not just about complicated systems — it requires agents that learn, adapt, and act on models of their world. That distinction explains why we can predict eclipses but not financial crashes.

The Translation

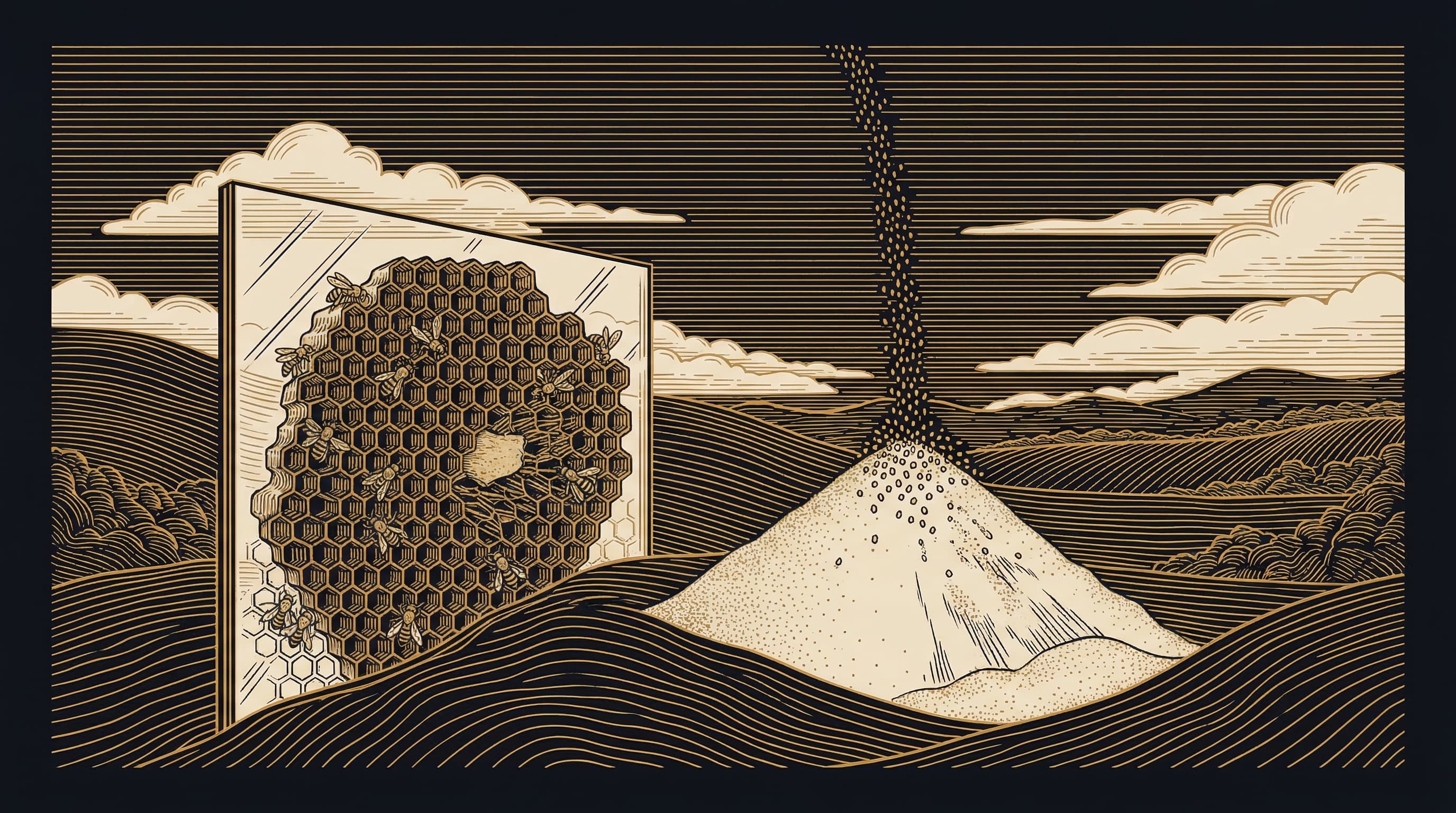

Complexity as a field suffers from a foundational ambiguity: it can be defined by its intellectual history, by its methodological toolkit, or by its domain of application, and these three definitions do not cleanly converge. The methods most associated with complexity research (agent-based modeling, network theory, scaling laws, nonlinear dynamics) are powerful but not definitive. Nonlinear dynamics, which traces to Poincaré's work on chaotic solutions to the three-body problem, can be applied to planetary motion, which is not complex in any substantive sense. Self-organized criticality, a hallmark complexity phenomenon, can be demonstrated in a sandpile, but a sandpile is merely complicated.

The more principled definition is ontological rather than methodological. Complex systems are those composed of adaptive agents: entities capable of forming internal representations of their environment and acting strategically on those representations. Neurons, market participants, urban inhabitants, and members of ancient trade networks are the constituents of genuinely complex systems. What distinguishes them is not scale or nonlinearity but the representational, anticipatory, and learning-capable character of the agents themselves.

This distinction carries predictive consequences that cannot be dissolved by increased computational power. Orbital mechanics yields to precise long-horizon forecasting; financial systems do not. That asymmetry is not epistemic; it is ontological. The irreducible adaptivity of agents introduces a category of unpredictability that is structural, not merely practical.